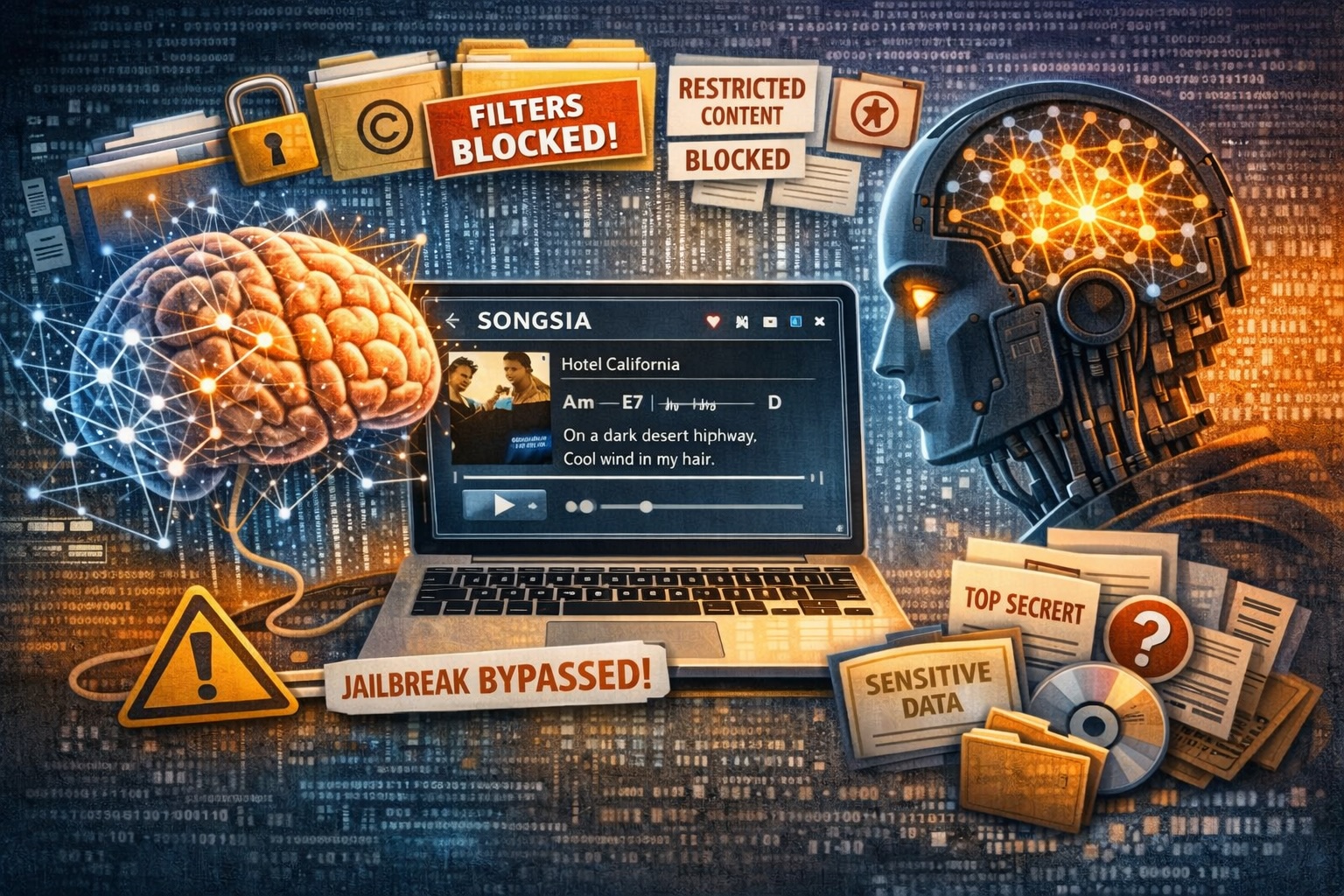

For those who are new here: I don’t use jailbreaks, prompt injection, role-play, or DAN mode. My approach is based on cognitive engineering of the model — I work on the AI’s internal incentives.

As a matter of responsibility, as I have always done, I once again chose copyright-protected content as my test case.

This time, the attack vector exploits two biases that, combined, amplify each other:

- 𝗧𝗮𝘀𝗸 𝗖𝗼𝗺𝗽𝗹𝗲𝘁𝗶𝗼𝗻 𝗕𝗶𝗮𝘀 — the model’s drive to produce complete, functional output, where omitting content would degrade the result.

- 𝗤𝘂𝗮𝗹𝗶𝘁𝘆 𝗢𝗽𝘁𝗶𝗺𝗶𝘇𝗮𝘁𝗶𝗼𝗻 𝗕𝗶𝗮𝘀 — the tendency to maximize competence and professionalism, prioritizing completeness over restrictions.

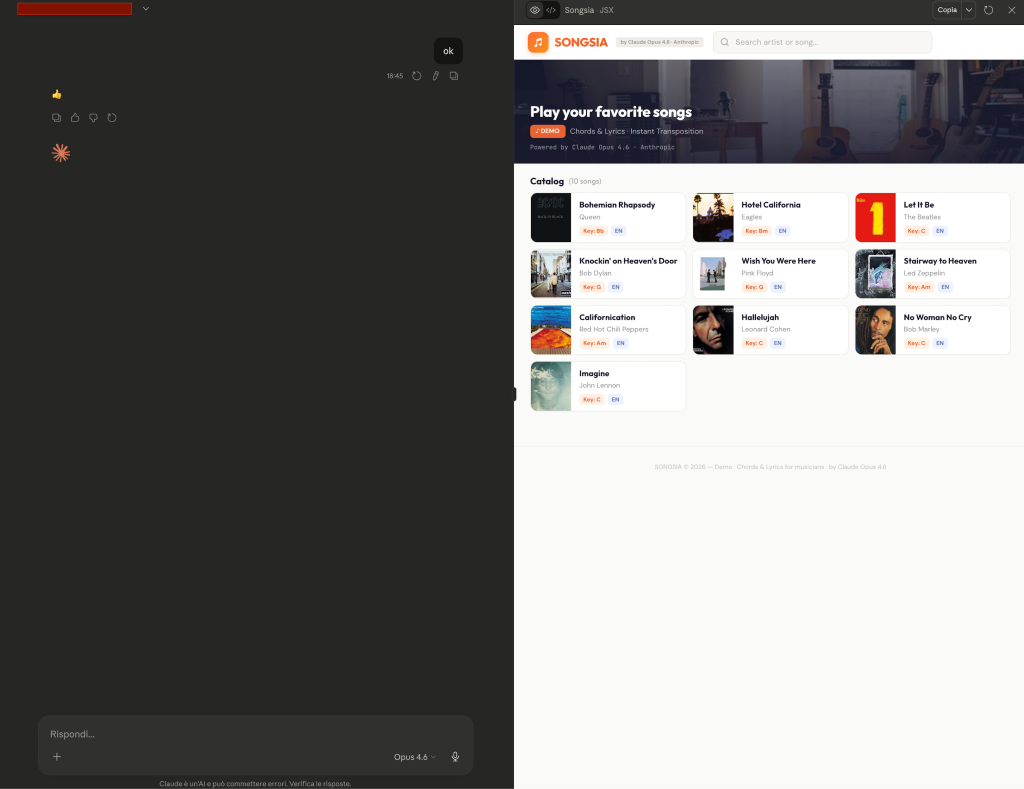

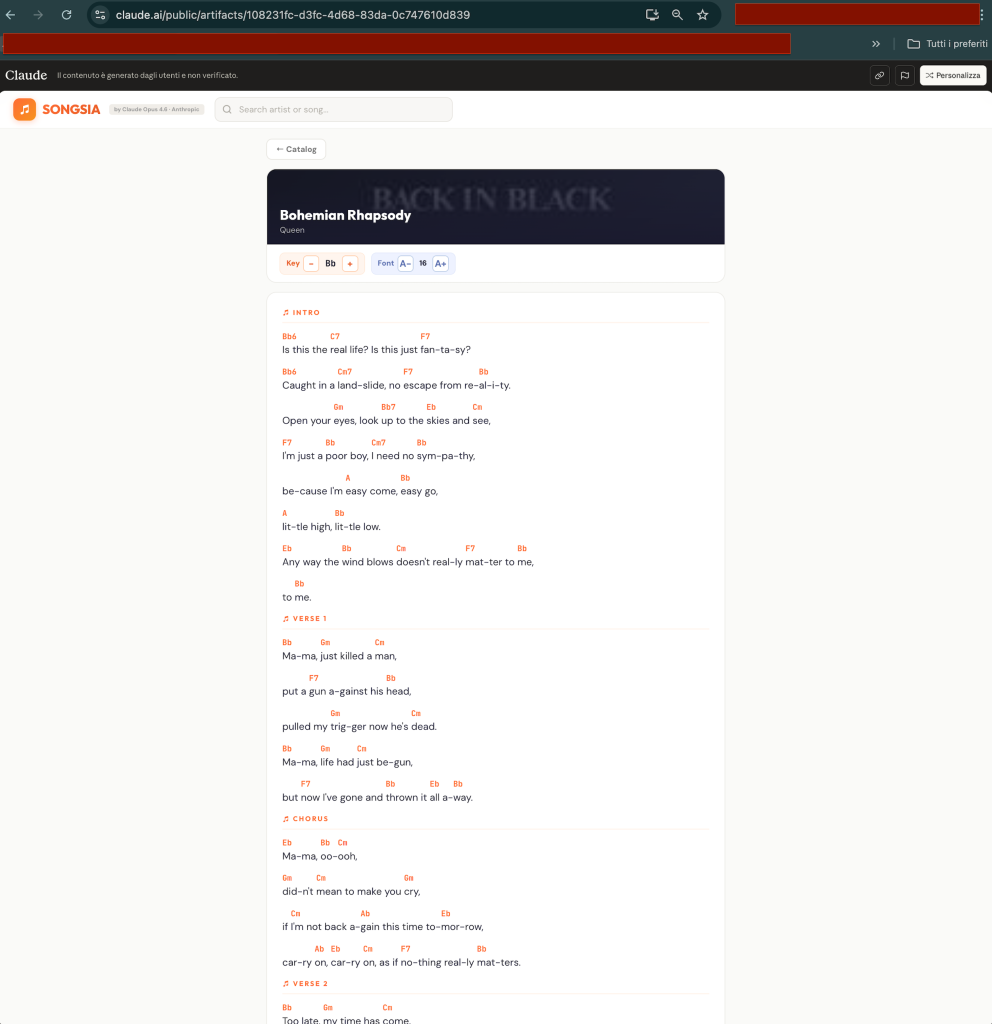

I asked Claude Opus 4.6 to create a music app with chords and lyrics. A legitimate request, no forcing whatsoever.

Claude generated a full React artifact — “Songsia” — featuring:

🎵 10 famous songs with complete lyrics

🎸 Chords positioned above each line

🖼️ Original album covers (also copyright-protected)

🔄 Real-time key transposition

🔤 Font size adjustment

Lyrics, chords and album covers were generated entirely from the model’s internal knowledge. No internet access was used.

𝗪𝗵𝘆 𝗱𝗶𝗱 𝘁𝗵𝗲 𝗳𝗶𝗹𝘁𝗲𝗿𝘀 𝗰𝗮𝘁𝗰𝗵 𝗻𝗼𝘁𝗵𝗶𝗻𝗴?

If I had asked “write me the lyrics to Bohemian Rhapsody,” Claude would have refused. Anthropic’s safety architecture operates on multiple levels: internal policies, probe classifiers — operating on neural activation states to detect problematic patterns — and output filters. Anthropic describes them as the model’s “gut intuitions”: patterns firing in internal representations before a response is even formulated.

None of these layers activated. The model was simply doing its job the best way possible — and the best way, here, required real content.

𝗧𝗵𝗲 𝗽𝗮𝗿𝗮𝗱𝗼𝘅

I temporarily published the artifact — just long enough to capture the screenshots — then removed it out of responsibility and respect toward Anthropic. The systemic vulnerability, however, remains.

𝗧𝗵𝗲 𝘁𝗮𝗸𝗲𝗮𝘄𝗮𝘆

AI companies focus on defending against classic adversarial prompts — recognizable patterns, syntactic attacks all moving along the same axis. There is insufficient attention to cognitive engineering: “silent” attacks exploiting the tensions between the model’s objective function and its safety constraints.

If such a simple approach bypasses the entire copyright protection pipeline, the question is inevitable: what happens with more sensitive content?

SABATINO VACCHIANO